AI Security: How to Give Data Access Without Exposing Secrets

Every IT Manager's Nightmare: Your Secrets in Your Competitors' Hands

You've probably heard the frightening story that circulated on LinkedIn and in the media: a software engineer at a major tech company (Samsung or Amazon, for example) just wanted to streamline their work. They uploaded a piece of proprietary code related to future chip development, or perhaps sensitive board meeting minutes, to ChatGPT and asked the bot in complete innocence: "summarize this for me," "find the bug," or "draft a reply email."

What they didn't know was that, in that moment, they had sent the company's most closely guarded trade secret to a third party's servers in the United States. From then on, that information could (at least in theory) be processed into the system's knowledge base and appear in responses to other users, including direct competitors asking similar questions.

This is the biggest fear (and rightfully so) of CEOs, CFOs, lawyers, and B2B organizations considering adopting AI technologies: "If I connect the bot to my CRM, will OpenAI or Google read my customer list? Will my financial data leak? Am I risking my patents?"

The short answer is: it depends very much on how you do it. If your employees are using the free version of ChatGPT in an unsanctioned, ad hoc way, you have a serious data security problem. But if you're building an enterprise solution managed through an API (application programming interface), your data can stay protected. Let's understand this critical difference in depth.

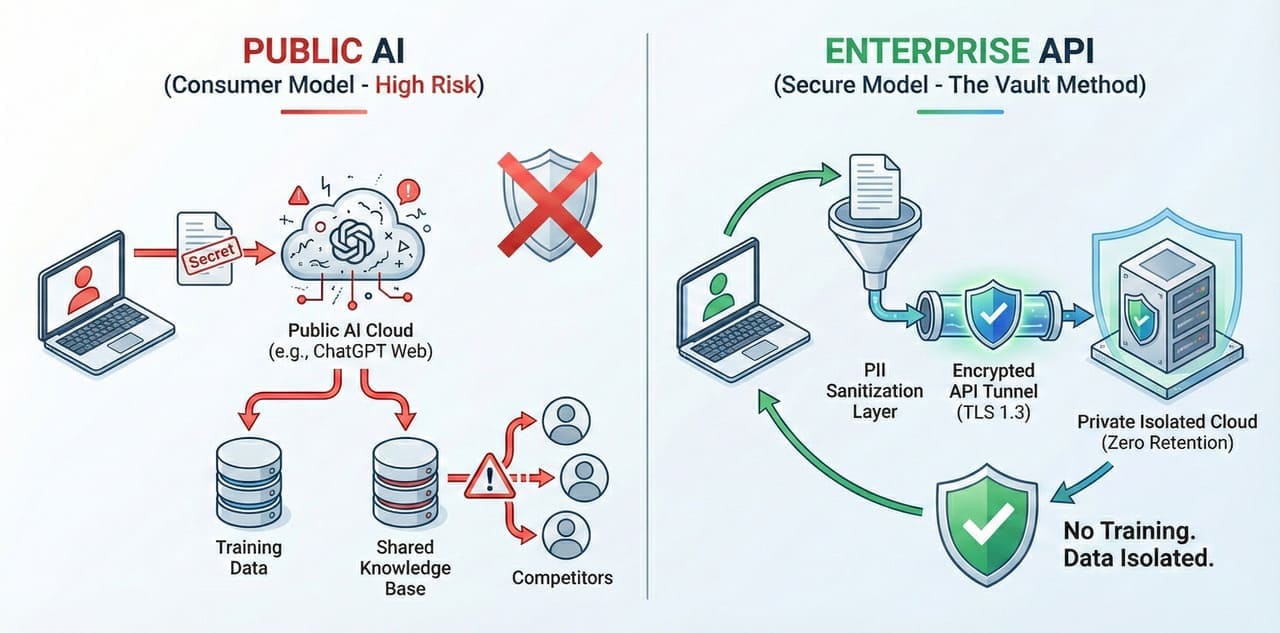

The Difference Between "Public AI" and "Enterprise AI"

To understand data security in 2026 and manage risks correctly, you must distinguish between two completely separate worlds:

1. The Consumer Model (Public)

This is what most people know (the web versions of ChatGPT, Claude.ai, and Gemini). You go to the website, write a question, and the information is stored on the provider's servers. The business model of these companies is partly based on your information helping to train and improve future models (training data).

- Security level: Relatively low by corporate standards.

- Privacy: The information may be used for model training, depending on the product and your account settings.

- Who it's suitable for: Writing birthday greetings, recipes, travel ideas, general questions. It should never be used for analyzing financial reports, legal contracts, or medical information.

2. The Enterprise Model (API)

When we at Whale Group build you a Consultant Agent or any other solution, we do not use the open website. We use a direct, private, and encrypted communication "pipe" to the provider's servers (the API). Under the legally binding terms of service of the major AI providers' enterprise offerings (OpenAI Enterprise, Google Vertex AI, Azure OpenAI), API usage comes with the following commitments:

- Zero Data Retention: Where this option is enabled in your agreement, your information is not stored on the provider's servers after processing is complete.

- No Training: Your information is not used to train the company's future model. It's yours and yours alone.

- Complete Separation (Isolation): The information passes, is processed, and is returned to you. It doesn't enter the AI's "general brain" and doesn't leak out to other users.

Our Security Architecture: "The Vault Method"

At Whale Group's Tech Consulting, we don't take unnecessary risks. We implement a multi-layered security architecture (Defense in Depth) to protect your assets and ensure compliance with strict standards (GDPR, SOC 2). We call it "The Vault Method":

- The Sanitization Layer (PII Redaction): Before the customer's question even reaches the AI, it passes through a smart filtering algorithm (Redactor) that automatically removes sensitive identifying details (PII, or Personally Identifiable Information) such as credit card numbers, ID numbers, private addresses, or passwords, if they're not necessary for the answer. This way, sensitive information stays on your server and never leaves it.

- Limited and Smart Context (RAG, or Retrieval-Augmented Generation): The bot doesn't "download the entire CRM" or the entire database. It works surgically. It gets pinpoint, read-only access to the specific piece of information relevant to that specific question at that moment. For example, it sees only the current customer's order status, not all orders in the company. This significantly reduces the attack surface.

- End-to-End Encryption: All communication, from your website to the server and back, is encrypted in transit with TLS 1.3 (the same protocol used by banks, financial institutions, and security agencies). Even if someone intercepts the communication along the way, they'll see only a meaningless sequence of characters ("gibberish").
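
The three layers above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Whale Group's actual implementation: the regex patterns, the `Order` record, the in-memory `ORDERS` list, and the function names `redact`, `fetch_context`, `build_prompt`, and `tls13_context` are all hypothetical. Only the standard-library `re`, `ssl`, and `dataclasses` modules are used.

```python
import re
import ssl
from dataclasses import dataclass

# Hypothetical redaction rules. Real deployments need broader,
# locale-aware patterns (addresses, passwords, national ID formats).
PII_PATTERNS = {
    "CARD": re.compile(r"\b\d(?:[ -]?\d){12,15}\b"),   # 13 to 16 digits
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "ID": re.compile(r"\b\d{9}\b"),                     # assumed 9-digit ID
}


def redact(text: str) -> str:
    """Layer 1: replace each PII match with a placeholder like [CARD]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


@dataclass(frozen=True)  # frozen: records cannot be modified by the bot
class Order:
    order_id: str
    customer_id: str
    status: str


# Stand-in for the CRM; a real system would query a database.
ORDERS = [
    Order("A-1", "cust-1", "shipped"),
    Order("B-7", "cust-2", "processing"),
]


def fetch_context(customer_id: str) -> list[str]:
    """Layer 2: return only the current customer's order statuses."""
    return [
        f"Order {o.order_id}: {o.status}"
        for o in ORDERS
        if o.customer_id == customer_id
    ]


def build_prompt(question: str, customer_id: str) -> str:
    """Combine the scoped context with the redacted question."""
    context = "\n".join(fetch_context(customer_id)) or "(no orders found)"
    return f"Context:\n{context}\n\nQuestion: {redact(question)}"


def tls13_context() -> ssl.SSLContext:
    """Layer 3: a client TLS context that refuses anything below TLS 1.3."""
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    return ctx
```

Calling `build_prompt(question, "cust-1")` would produce a prompt containing only that customer's orders and a question with card numbers and email addresses replaced by placeholders. The context returned by `tls13_context()` could then be passed to whichever HTTP client sends the prompt to the provider.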

The Real Danger Is Not AI, But "Shadow AI"

Research shows that the biggest risk to your organization today is not organized AI implementation, but the opposite: ignoring it. While you're deliberating and waiting, your employees are already using AI "under the radar" to ease their workload. They're pasting Excel tables into unsecured free bots and using unauthorized tools, and that's exactly where the big leaks happen. This phenomenon is called "Shadow AI". It happens because employees want to be efficient but haven't been given approved tools.

The only effective way to prevent this is not to ban AI (because it's impossible to enforce), but to provide employees with a safe, approved, and managed alternative.

Tip for Managers: Training Is Your Most Powerful Tool

Technology isn't enough. We always recommend that our clients conduct quarterly employee training on "AI hygiene." Explain what's allowed and what's not, what information can be put in a bot and what must stay on the local computer. Employee awareness is the best firewall.

Frequently Asked Questions (FAQ)

Q: Can I install the model on my own server (On-Premise)? A: Yes. For enterprise organizations, we offer installation of local models (like Llama 3) that run physically on your servers and never send data over the internet.

Q: Will my employees know how to use the security tools? A: It's transparent to them. The protection mechanisms run in the background. The employee asks a question, the protections work, and they receive an answer. No technical knowledge required.

Q: Will OpenAI use my data for training? A: Absolutely not. Our Enterprise contract includes a legal commitment that your data will not be used for training and that complete separation (siloing) will be maintained.

Q: What happens in case of a cyberattack? A: We use leading cloud infrastructure (AWS/Google Cloud) with advanced protections (WAF, DDoS protection) designed to maintain resilience and availability even under attack.

Q: Do I have a backup of conversations? A: Yes. If you choose, all conversations are stored in encrypted logs on the organization's side for auditing and review, so you can always go back and see what was said.

Q: Do you comply with European GDPR standards? A: Absolutely. All our systems are built to strict privacy standards (Privacy by Design), including "right to be forgotten" mechanisms and data deletion upon user request.
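
To make the "right to be forgotten" mechanism concrete, here is a minimal sketch of deletion on user request, assuming conversations are kept in a single SQLite table keyed by user. The table name `conversations`, its columns, and the functions `init_db`, `log_message`, and `forget_user` are hypothetical, and the sketch deliberately leaves out the log encryption mentioned above. A real erasure process would also have to cover backups and any downstream copies.

```python
import sqlite3


def init_db(conn: sqlite3.Connection) -> None:
    """Create the (hypothetical) conversation log table if it is missing."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS conversations ("
        "id INTEGER PRIMARY KEY, "
        "user_id TEXT NOT NULL, "
        "message TEXT NOT NULL)"
    )
    conn.commit()


def log_message(conn: sqlite3.Connection, user_id: str, message: str) -> None:
    """Store one conversation message for the given user."""
    conn.execute(
        "INSERT INTO conversations (user_id, message) VALUES (?, ?)",
        (user_id, message),
    )
    conn.commit()


def forget_user(conn: sqlite3.Connection, user_id: str) -> int:
    """Delete every stored message for a user; return how many were removed."""
    cur = conn.execute(
        "DELETE FROM conversations WHERE user_id = ?", (user_id,)
    )
    conn.commit()
    return cur.rowcount
```

Returning the number of deleted rows gives the operator something to record as evidence that the request was carried out.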

Security Builds Trust — Summary

In summary, data security is not a place to cut corners. When you choose a real enterprise AI solution, you're not only protecting yourself — you're signaling to your customers that you're serious, professional, and respect their privacy. This is the foundation of every business relationship in the new era.

In an era where everyone is concerned about their privacy and learns about cyber breaches in the news every other day, your ability to tell customers and investors: "Your information is protected with us to the strictest security standards of 2026" is not just a technical requirement. It's a major competitive advantage in your marketing. It builds trust, and trust equals sales.

Don't let fear paralyze you. Data security is not a barrier to innovation — it's the strong foundation that enables it.

Michael Romm

Michael is a co-founder of Whale Group, leading business and marketing strategy. He is an expert in data (SQL, Python) and in developing automation and AI solutions for businesses.